I Rebuilt the Entire UI for My Raspberry Pi Camera App Using AI. Here's What Actually Happened.

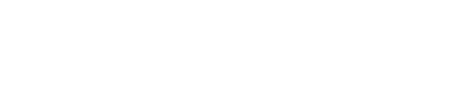

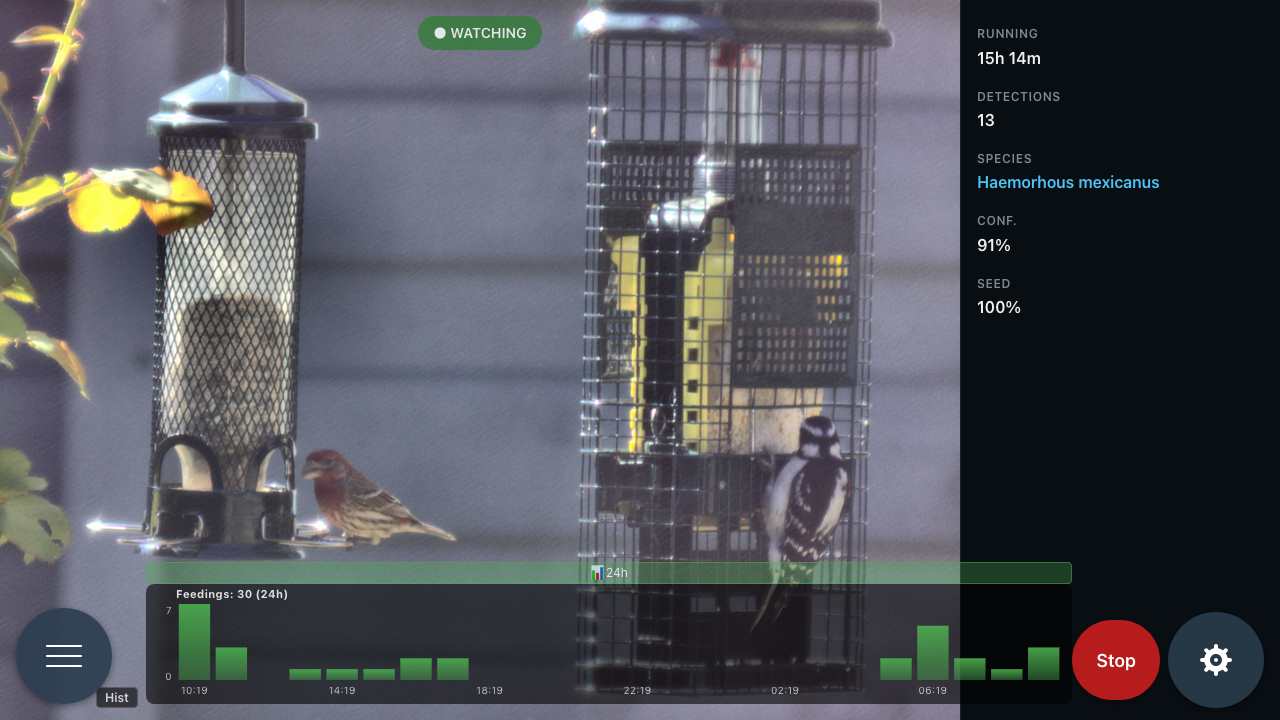

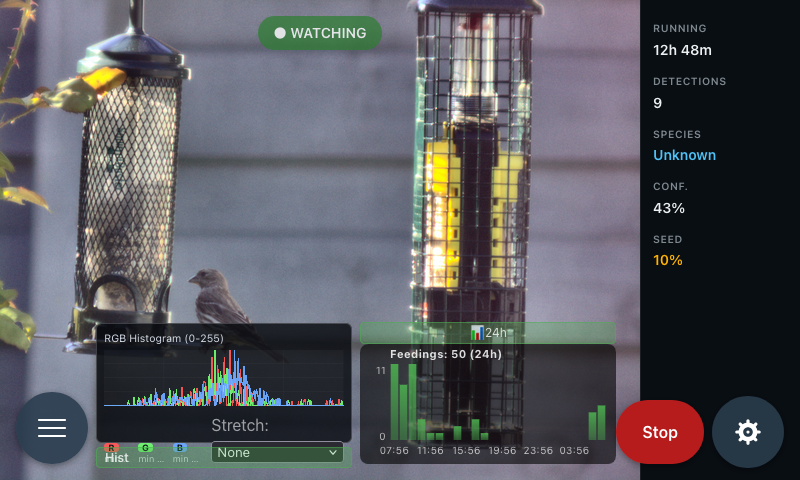

Left: the old Bird Watcher view — sidebar controls and a seven-item toolbar consume over a third of the 800×480 screen. Right: the new layout — the camera image fills the viewport, status lives in the pillarbox, and controls are behind a hamburger menu and settings sheet.

When I started this project, the UI for my Raspberry Pi camera app had been built view by view over about two months — first commit: February 9, 2026. It worked. But it had accumulated the kind of debt that comes from building incrementally without a plan: a fixed toolbar at the bottom eating screen space, separate views for each camera mode that couldn't share state, controls that were hard to tap on the HyperPixel 4's 800×480 touchscreen, and two action-bar panels — a histogram and a feeding activity graph — that overlapped whenever both were open at the same time.

I decided to do a full UI revision in a single focused session, using GitHub Copilot (Claude Sonnet) as my AI pair programmer. This article is about what that actually looked like: not the highlight reel, but the real process, including the prompts I used, the moments where I had to repeat myself because the AI got it wrong, a dark mode debugging saga that ended with the AI giving up on the original approach entirely, and one feature where I watched the AI struggle through seven attempts to get a test passing.

The focus of this project has always been how I'm using AI to write the code. I give the prompts. The AI writes the implementation, the tests, even the E2E test infrastructure. My role is to describe what I want, evaluate whether it worked, and steer when it doesn't.

Note: This is a long, technical article that walks through the session step by step. If you don't care about the details and just want to know what worked and what didn't, skip to What Worked, What Didn't at the bottom.

Starting with a Plan

The session began not with code but with a plan. I described the full scope of what I wanted in a single prompt:

"I want to change the layout of the pipiece-ui vuejs ui. It currently has a toolbar at the bottom that contains several child views. There are 4 views that shows the last image from the camera, one for viewing files, one for documentation, and one for system configuration. The camera views have a side bar on the left side with camera controls. I want to change the main toolbar to a pop over, so it works even on narrow screens like a vertical iphone. All of the camera views should be combined, with the different options on another pop over. I want to optimize the images from the camera so they can take up as much room as possible. The layout should work with vertical and horizontal iphone, the 800x480 hyperpixel display and on the touchscreen2 resolution."

Copilot entered plan mode. It read over 30 source files — every view, every component, the router, the CSS, the stores — before asking clarifying questions. Then it produced a phased plan:

- NavMenu component — hamburger FAB replacing the bottom toolbar

- SettingsSheet component — slide-up panel for camera controls

- Unified CameraView — all camera modes combined with tabs

- Router changes — redirect old routes

- Tests for each phase — red first, then green

The plan included ASCII diagrams of the layout, listed every file that would be modified, and flagged known complexity like the BirdWatcher ROI pointer-event scaling that uses hardcoded container dimensions.

I said "Start implementation" and it began.

What PiPiece Actually Is

PiPiece is a camera controller for the Raspberry Pi HQ Camera and HyperPixel 4 touchscreen (800×480). It pulls double duty: during the day it's a birdwatching camera — it watches a feeder, runs motion detection and species classification locally using a CNN, and logs every visit. At night, it's an electronic eyepiece for a telescope doing astrophotography. That dual use is why dark mode matters — you can't have a white UI blinding you at the eyepiece.

The camera modes include live preview, timelapse, auto-exposure, bird watcher, single capture, and focus assist. Before this session, each mode was its own view with its own sidebar of controls. The Bird Watcher had a status display — running time, detection count, species, confidence, seed level — always visible in its dedicated view.

The Approach: Write the Test First

Before touching any component code, the AI wrote tests. This is the TDD rhythm I enforce on every feature: red first, then green.

This is genuinely harder than it sounds when you're working with a UI that talks to hardware. The camera doesn't run in a test environment. The Pi isn't plugged in. So every test starts with mocks: mock the fetch layer, mock the camera API, stub the child components that have their own network calls.

For the Bird Watcher mode, one of the tests verifies that the camera auto-switches correctly when it detects a watcher already running on startup:

    it('auto-switches to bird-watcher mode and opens settings when bird-watcher is running on mount', async () => {
      getJson.mockImplementation((url) => {
        if (url === '/api/bird-watcher/status') return Promise.resolve({ active: true })
        return Promise.resolve({ active: false })
      })

      const wrapper = mount(CameraView, { global: { stubs } })
      await flushPromises()

      expect(wrapper.find('[data-testid="mode-bird-watcher"]').exists()).toBe(true)
      expect(wrapper.find('[data-testid="settings-sheet"]').isVisible()).toBe(true)
      expect(wrapper.find('[data-testid="status-badge"]').exists()).toBe(true)
    })

This test failed immediately because none of those elements existed yet. That's the point. Red first, then green. (ui/src/views/__tests__/CameraView.spec.js)

The test suite for CameraView ended at 21 tests covering auto-mode detection on startup, settings sheet interaction, mode switching, the action button label, the 60-second auto-close timer, and the overlay components. All 91 unit tests had to pass after every change.

The First Speed Bumps: Hamburger Clipping

The initial implementation went smoothly — NavMenu, SettingsSheet, CameraView with mode tabs, router updates, all 84 unit tests passing, all E2E baselines captured. Then I deployed to the actual hardware and started using it.

"The hamburger button is clipped on the camera view"

The AI identified overflow: hidden on the app container as the culprit and removed it. I tested again.

"it's still clipped of the top"

Second attempt: the AI scoped the CSS height: 100% rule to exclude NavMenu with :not(.nav-menu). The E2E screenshots even looked correct to the AI. Still wrong on the actual device.

"It is still clipped on the top"

Third attempt: the AI finally used <Teleport to="body"> to render NavMenu completely outside any containing block. That worked. Three prompts for what turned out to be a one-line template change. The AI's first two attempts were reasonable CSS fixes — they just didn't account for how Vue's scoped styles interact with fixed positioning inside nested containers.

Adding E2E Viewport Configurations

With the basic layout working, I wanted to test across all the target screens:

"in the playwright.config.js, add projects for chromium touch that matches the touch screen 2, webkit emulating horizontal and vertical layouts, and an ipad pro horizontal layout."

The AI added four new Playwright projects. Tests ran, but three failed on iPhone portrait — the hamburger button was too close to file list items at 390px width. This motivated the overlap detector.
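As a rough sketch of what four projects like that might look like, the array below spells out the `use` options by hand instead of relying on Playwright's device presets. The project names and most viewport sizes are illustrative assumptions, not copied from the repository; only the 390px portrait width and the 1280×720 TouchDisplay 2 resolution come from this article.

```javascript
// Hypothetical project definitions for playwright.config.js.
// In a real config this array would be passed as `projects` to defineConfig().
const projects = [
  {
    name: 'touchdisplay2-chromium', // assumed name
    use: { browserName: 'chromium', viewport: { width: 1280, height: 720 }, hasTouch: true },
  },
  {
    name: 'iphone-portrait-webkit', // assumed name and height
    use: { browserName: 'webkit', viewport: { width: 390, height: 844 }, hasTouch: true, isMobile: true },
  },
  {
    name: 'iphone-landscape-webkit', // assumed name
    use: { browserName: 'webkit', viewport: { width: 844, height: 390 }, hasTouch: true, isMobile: true },
  },
  {
    name: 'ipad-pro-landscape-webkit', // assumed name and size
    use: { browserName: 'webkit', viewport: { width: 1366, height: 1024 }, hasTouch: true, isMobile: true },
  },
]

// Quick sanity check: list each project with its viewport.
for (const p of projects) {
  console.log(`${p.name}: ${p.use.viewport.width}x${p.use.viewport.height}`)
}
```

Writing the viewports out explicitly makes it obvious which widths the layout has to survive, which is how the 390px problem became visible.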

The Overlap Detector: AI-Written Test Infrastructure

Unit tests confirm behavior. They can't confirm layout. I gave one prompt:

"Add playwright end to end tests that open each view and ensure that no controls are overlapping each other."

From that single sentence, the AI wrote an entire overlap detection test suite: ui/e2e/overlap.spec.js. It runs against every view, every viewport, checking every pair of interactive controls. The core intersection check:

    for (let i = 0; i < candidates.length; i++) {
      for (let j = i + 1; j < candidates.length; j++) {
        const { el: a, visible: ra } = candidates[i]
        const { el: b, visible: rb } = candidates[j]

        // Skip ancestor-descendant pairs (e.g. icon <span> inside <button>)
        if (a.contains(b) || b.contains(a)) continue

        const ox = Math.min(ra.right, rb.right) - Math.max(ra.left, rb.left)
        const oy = Math.min(ra.bottom, rb.bottom) - Math.max(ra.top, rb.top)

        if (ox > 0 && oy > 0 && ox * oy > OVERLAP_THRESHOLD_PX2) {
          overlaps.push(
            `${describe(a)}\n overlaps ${describe(b)}\n by ${Math.round(ox)}×${Math.round(oy)}px (${Math.round(ox * oy)}px²)`,
          )
        }
      }
    }

It ignores sub-pixel rounding (4px² threshold), ignores cases where one element is a child of the other (an icon inside a button), and only flags genuine layout bugs. When it ran, it immediately caught a real overlap: the file row checkbox overlapping the hamburger on the iPhone landscape viewport. The AI wrote the test, the test caught a bug I hadn't seen, and the fix went in the same commit.

I didn't write any of that test logic. I described what I wanted in one sentence and the AI produced a working implementation that found real bugs. That's the kind of feedback loop that makes this workflow worth it.
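The excerpt above depends on helpers that aren't shown (the candidate list and the describe function), so here is a minimal sketch of the same intersection math on plain rectangle objects. The function name and the sample rectangles are made up for illustration; only the 4px² threshold and the min/max arithmetic come from the real test.

```javascript
// Threshold taken from the article: overlaps of 4px² or less are ignored.
const OVERLAP_THRESHOLD_PX2 = 4

// rects: [{ name, left, top, right, bottom }] in CSS pixels.
function findOverlaps(rects, threshold = OVERLAP_THRESHOLD_PX2) {
  const overlaps = []
  for (let i = 0; i < rects.length; i++) {
    for (let j = i + 1; j < rects.length; j++) {
      const a = rects[i]
      const b = rects[j]
      // Width and height of the intersection; zero or negative means no overlap on that axis.
      const ox = Math.min(a.right, b.right) - Math.max(a.left, b.left)
      const oy = Math.min(a.bottom, b.bottom) - Math.max(a.top, b.top)
      if (ox > 0 && oy > 0 && ox * oy > threshold) {
        overlaps.push({ a: a.name, b: b.name, width: ox, height: oy, area: ox * oy })
      }
    }
  }
  return overlaps
}

// Made-up rectangles: a 44px hamburger and a checkbox that intrudes into its corner.
const sample = [
  { name: 'hamburger', left: 8, top: 8, right: 52, bottom: 52 },
  { name: 'checkbox', left: 40, top: 30, right: 64, bottom: 54 },
  { name: 'action-button', left: 700, top: 420, right: 792, bottom: 472 },
]
console.log(findOverlaps(sample))
```

In the browser version the rectangles would come from each element's bounding box, and the ancestor check would filter out pairs like an icon inside its own button.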

Auto-Switch to Active Camera Mode

"When you switch to the camera view and a preview repeat, timelapse, auto repeat, or bird watching is currently running, open that view automatically."

The AI wrote failing tests first, then implemented onMounted hooks that check each mode's status endpoint. If bird-watcher or timelapse is running, the camera view opens in that mode with the settings sheet visible. The catch: preview repeat and auto repeat are client-side-only states with no server API, so auto-switch only works for timelapse and bird-watcher. The AI flagged this limitation itself.
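A minimal sketch of that startup check, assuming each server-backed mode exposes a status endpoint that returns an `active` flag. The bird-watcher URL is the one used in the test earlier in this article; the timelapse URL, the function name, and the `preview` fallback are assumptions for illustration rather than the component's actual code.

```javascript
// Modes that have a server-side status endpoint.
const STATUS_ENDPOINTS = [
  { mode: 'bird-watcher', url: '/api/bird-watcher/status' },
  { mode: 'timelapse', url: '/api/timelapse/status' }, // hypothetical URL
]

// getJson is injected so the function can be exercised without a network.
async function detectActiveMode(getJson, fallback = 'preview') {
  for (const { mode, url } of STATUS_ENDPOINTS) {
    try {
      const status = await getJson(url)
      if (status && status.active) return mode
    } catch {
      // A failing endpoint should not block the view from opening.
    }
  }
  return fallback
}

// Usage with a fake fetch layer: only the bird watcher reports itself as running.
const fakeGetJson = (url) => Promise.resolve({ active: url === '/api/bird-watcher/status' })
detectActiveMode(fakeGetJson).then((mode) => console.log(mode))
```

In the component, something like this would run inside onMounted, and a non-fallback result would also open the settings sheet. Preview repeat and auto repeat can't appear in the endpoint list because their state only exists in the browser.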

Bigger Touch Targets

"the controls are too small on the hyperpixel4 display, can you make them bigger?"

The AI looked up the HyperPixel 4 resolution (800×480, 4" diagonal, which works out to roughly 233 DPI) and the TouchDisplay 2 (1280×720, 7", roughly 210 DPI), then scaled all touch targets to meet the 44px minimum recommended by Apple's Human Interface Guidelines. It updated the NavMenu hamburger, the settings toggle, and the action button across all viewport media queries.
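Pixel density follows directly from resolution and diagonal size, so it's easy to check. The sketch below also converts a 44px target into millimetres, under the assumption that one CSS pixel maps to one device pixel on these panels; the function names are mine, not something from the codebase.

```javascript
// Pixels per inch from a panel's resolution and diagonal size.
function pixelsPerInch(widthPx, heightPx, diagonalInches) {
  return Math.hypot(widthPx, heightPx) / diagonalInches
}

// Physical width in millimetres of a target that is `px` device pixels wide.
function physicalSizeMm(px, ppi) {
  return (px / ppi) * 25.4
}

const displays = [
  { name: 'HyperPixel 4', width: 800, height: 480, diagonal: 4 },
  { name: 'TouchDisplay 2', width: 1280, height: 720, diagonal: 7 },
]

for (const d of displays) {
  const ppi = pixelsPerInch(d.width, d.height, d.diagonal)
  const mm = physicalSizeMm(44, ppi)
  console.log(`${d.name}: ${Math.round(ppi)} PPI, a 44px target is about ${mm.toFixed(1)} mm`)
}
```

The same pixel count is physically smaller on the denser panel, which is why controls that feel fine on a desktop monitor are fiddly on a 4" screen.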

The Test That Wouldn't Pass: The 60-Second Auto-Close Timer

One prompt started this:

"When opening the camera view, the sheet content opens. If there is no user movement or actions, it should autoclose after 60 seconds"

A small UX detail for a touchscreen where nobody walks back to dismiss the settings panel. The AI wrote failing tests first, then the implementation. Getting the tests to actually verify the timer behavior is where things got interesting — and where I watched the AI genuinely struggle.

The naive approach is vi.useFakeTimers() and vi.advanceTimersByTime(60_000). That's what you'd reach for first. It didn't work. The AI tried it, the timer fired, but the test assertion still failed. So it tried a different approach. That failed too. Then another. And another.

Over the course of seven distinct attempts, the AI tried:

- Using Vue's watch to react to timer state changes: 18/21 tests passed

- Switching from watch to a synchronous toggle handler: still 18/21

- vi.useFakeTimers() + vi.advanceTimersByTime() (the obvious approach): still 18/21

- Spying on setTimeout/clearTimeout and invoking callbacks directly: 20/21

- Calling wrapper.vm.closeSheet() directly: still 20/21

- advanceTimersByTimeAsync: still 20/21

- Spying on setTimeout.mock.calls with debug console.log: discovered the root cause

After adding debug output, the AI discovered the actual problem: Vue Test Utils' mount() doesn't attach to document.body by default, and jsdom's getComputedStyle returns incorrect values for disconnected DOM elements. The component was checking computed styles to determine visibility, and jsdom was lying about them for elements not in the document.

The fix was attachTo: document.body in the mount options plus wrapper.unmount() in cleanup. Then all 21/21 tests passed.

The final test in the codebase:

    it('auto-closes the settings sheet after 60 seconds of inactivity', async () => {
      const setTimeoutSpy = vi.spyOn(globalThis, 'setTimeout')
      const wrapper = mount(CameraView, { global: { stubs }, attachTo: document.body })

      await wrapper.find('[data-testid="settings-toggle-btn"]').trigger('click')

      // Find the 60-second timer callback registered by openSheet → resetSheetTimer
      const timerCall = setTimeoutSpy.mock.calls.find(([, delay]) => delay === 60_000)
      expect(timerCall).toBeTruthy()

      // Call it directly - simulates the idle timer firing without needing to advance 60s of real time
      timerCall[0]()
      await wrapper.vm.$nextTick()

      expect(wrapper.find('[data-testid="settings-sheet"]').isVisible()).toBe(false)
    })

The comment "without needing to advance 60s of real time" is the marker of the wrong paths tried first. Three related timer tests all use the same spy-and-invoke pattern: one checks that clearTimeout is called when user activity resets the timer, another mocks setTimeout to return a fixed ID and verifies that exact ID is passed to clearTimeout when the sheet closes manually.

Watching this unfold was genuinely satisfying. Not because the AI failed — but because you could see it working through the problem systematically, trying approaches, hitting walls, adding diagnostics, and narrowing down to the real root cause. That's how debugging works for humans too. The AI just does it faster and doesn't get frustrated.
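The spy-and-invoke pattern from those timer tests doesn't depend on Vitest or Vue, so here is a stripped-down sketch of it in plain JavaScript. The idle-timer helper and the hand-rolled spy are hypothetical stand-ins for the component's real timer code and for vi.spyOn; they only illustrate the idea of capturing the callback and calling it yourself.

```javascript
// Thin wrappers around the real timers, used when nothing is injected.
const realTimers = {
  setTimeout: (fn, ms) => setTimeout(fn, ms),
  clearTimeout: (id) => clearTimeout(id),
}

// Hypothetical idle timer: calls onClose after delayMs without a reset.
function createIdleCloser(onClose, delayMs, timers = realTimers) {
  let timerId = null
  return {
    reset() {
      if (timerId !== null) timers.clearTimeout(timerId)
      timerId = timers.setTimeout(onClose, delayMs)
    },
    cancel() {
      if (timerId !== null) timers.clearTimeout(timerId)
      timerId = null
    },
  }
}

// Hand-rolled spy: records every call and never schedules anything.
const calls = []
const cleared = []
const fakeTimers = {
  setTimeout: (fn, delay) => {
    calls.push([fn, delay])
    return calls.length // use the call count as a fake timer ID
  },
  clearTimeout: (id) => {
    cleared.push(id)
  },
}

let sheetOpen = true
const closer = createIdleCloser(() => { sheetOpen = false }, 60_000, fakeTimers)
closer.reset()

// Find the 60-second callback and invoke it directly instead of waiting.
const timerCall = calls.find(([, delay]) => delay === 60_000)
timerCall[0]()
console.log(sheetOpen)
```

Because the fake returns a known ID, the same setup can also verify that a reset or a manual close passes that exact ID to clearTimeout.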

Dark Mode: Not a Goal — a Debugging Saga

Making dark mode unconditional was never on the list. It's where we ended up after the AI couldn't figure out how to make it switch properly on the Pi's Chromium browser. But dark mode is essential for this app — half its use is astrophotography at night, where any white UI element destroys your night vision.

It started with a screenshot from the Pi:

"On chromium on the raspberry pi, the hamburger button color isn't right. Here's a screen shot. Also the icons on the mode tabs are broken."

The problem: hamburger lines rendered dark-on-dark in Chromium's kiosk mode. The Capture button and settings gear render fine — only the SVG hamburger lines are affected.

The mode tab icons were easy — the Pi doesn't have emoji fonts, so emoji characters rendered as blank boxes. The fix was to switch back to the SVG icon components that already existed in the original implementation. The AI had chosen emojis for the new mode tabs when it should have reused the existing icons from the start — a reminder that the AI doesn't always pick the obvious solution when one is already sitting in the codebase.

The hamburger button was a different story. The Pi runs Chromium in kiosk mode with --force-dark-mode and --enable-features=WebContentsForceDark. The hamburger lines showed up dark-on-dark — invisible. Firefox and Safari were fine.

"The hamburger button in the nav menu is still dark on chromium. See the screenshot. It appears normal in firefox (light and dark mode) and in safari."

The AI's first fix: remove the --force-dark-mode and WebContentsForceDark flags entirely, reasoning the app was already dark-themed.

"It is still dark. There's a raspberry pi ssh connection with the chromium remote debugging port open on localhost:9222 if you can access that to see."

The AI went deep into remote debugging — connected via WebSocket to Chrome DevTools Protocol, ran Runtime.evaluate, CSS.getComputedStyleForNode, even Page.captureScreenshot. It wrote elaborate diagnostic scripts, 100+ lines each. The computed styles all reported rgb(255, 255, 255) (white) for the hamburger lines. But I could see them as dark on the screen.

I had to correct a critical misunderstanding:

"This app is intended to be used at night, so the force dark mode is needed when chromium is started in kiosk mode. Also on the pi it still looks dark and not white. If I force the dark mode, it appears white. If I force light mode it gets dark with a dark background. Both modes should be white bars."

The AI restored the flags and added color-scheme: dark with !important on backgrounds.

"I've rebooted the pi and it still shows dark lines on the hamburger"

More CSS fixes.

"Reloaded the UI and it still is showing dark lines on the hamburger"

After six-plus rounds of this, the AI finally figured out what was actually happening: WebContentsForceDark does algorithmic color inversion at the GPU render pipeline level, AFTER CSS is computed. JavaScript's getComputedStyle reports the pre-inversion values — which is why debugging tools all reported "white" but I could see "dark" on the physical screen. The AI had been debugging a phantom.

The fix: drop WebContentsForceDark but keep --force-dark-mode on its own (which, as far as the AI could tell at that point, just sets prefers-color-scheme: dark).

"That fixed the hamburger, but now the settings sheet has a white background, it should be dark. Here's a screenshot"

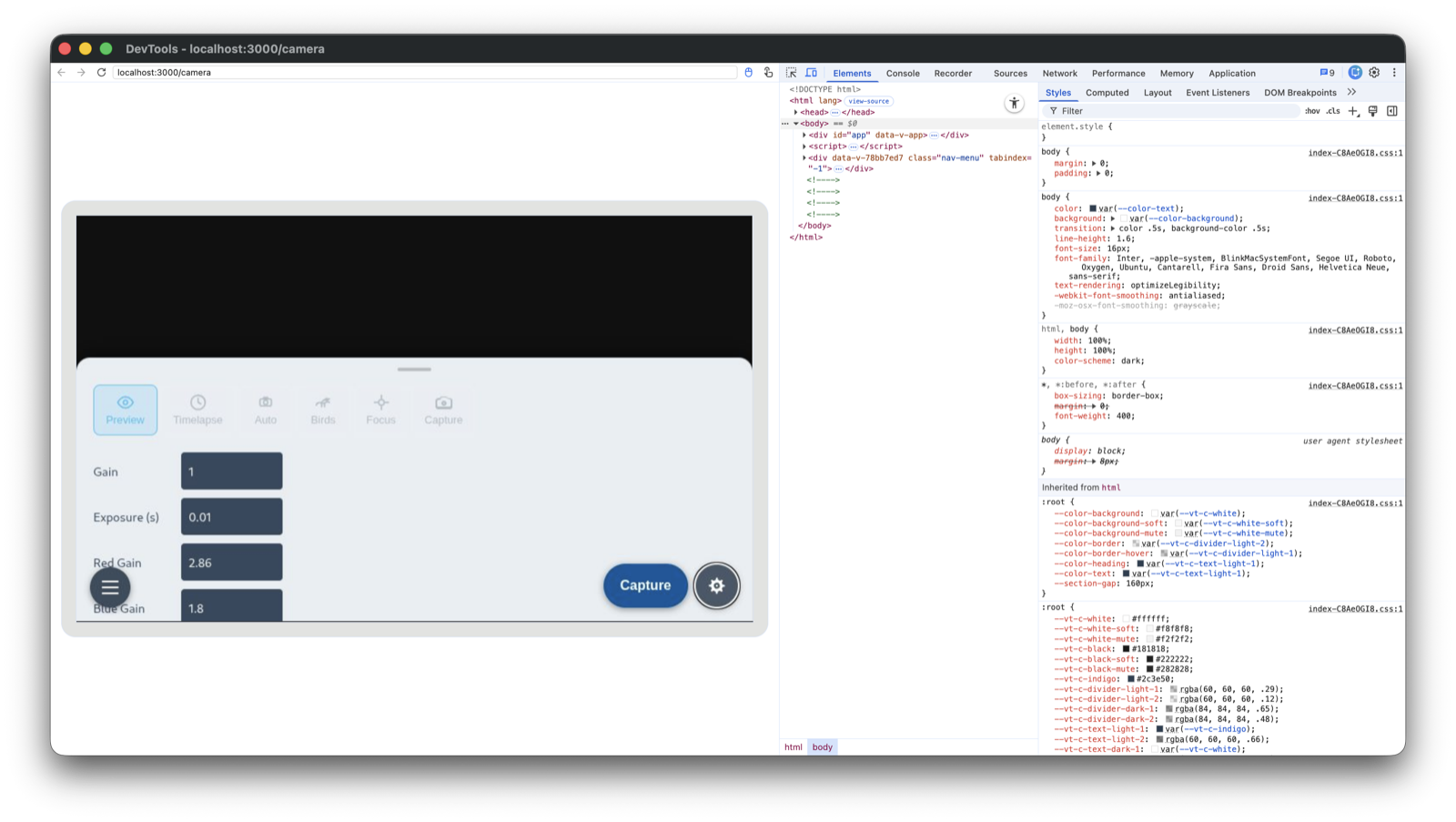

After removing WebContentsForceDark, the settings sheet went white. DevTools on the right shows the CSS variables: --color-background is resolving to var(--vt-c-white) because the light-mode media query is active.

Removing WebContentsForceDark exposed that the app had been relying on it for dark backgrounds.

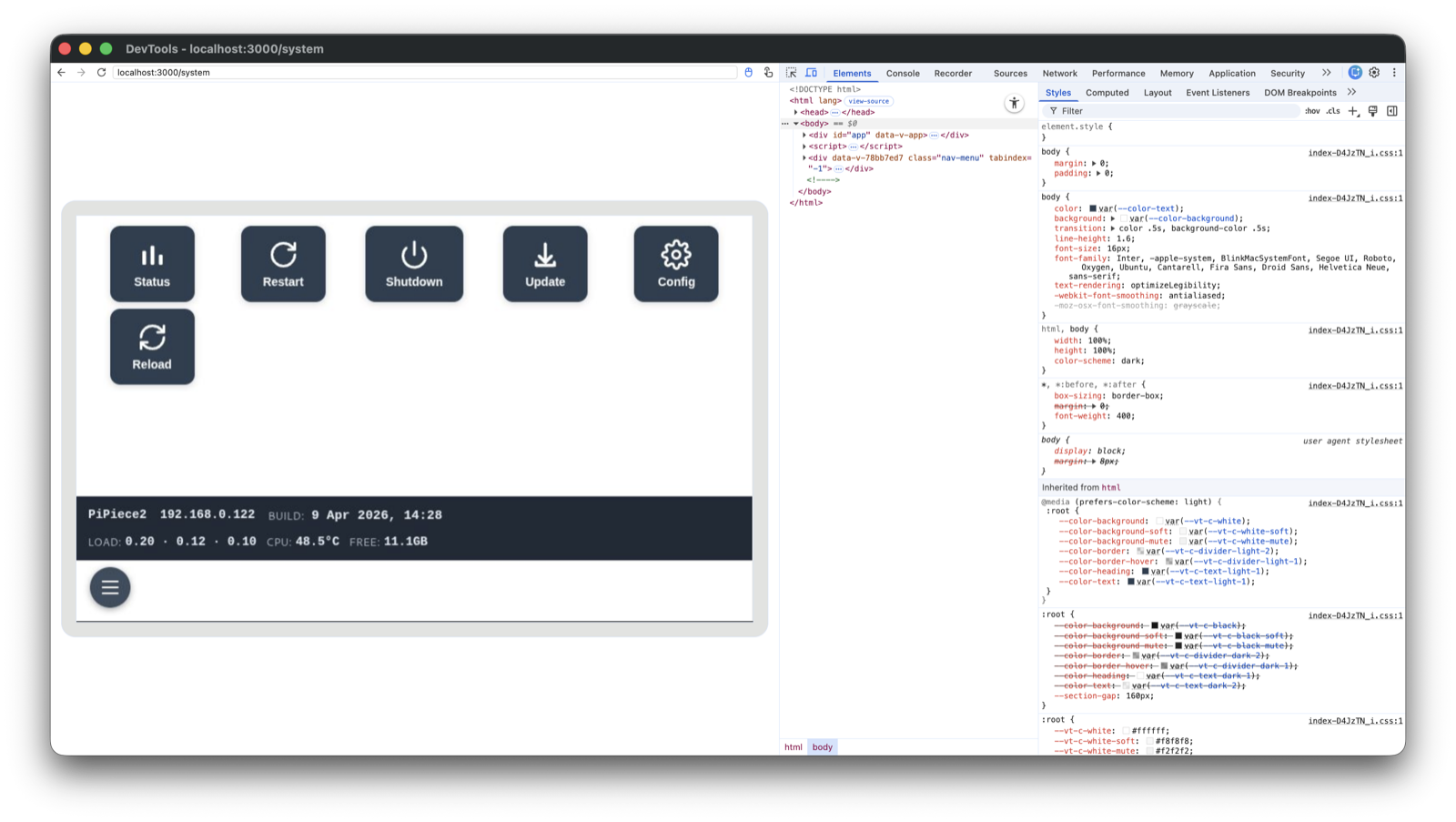

"that still has a white background and the system view does too. Here is a screen shot from the pi"

Same problem on the system view. DevTools shows the @media (prefers-color-scheme: light) block crossed out by a higher-specificity rule, but the light values are still winning because --force-dark-mode doesn't actually change what the CSS media query reports.

The AI discovered that --force-dark-mode doesn't actually affect CSS prefers-color-scheme queries. The Pi reported prefersLight: true. So every @media (prefers-color-scheme: light) block was firing, painting everything white.
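The session log doesn't show the exact diagnostic the AI ran, but a check along these lines would produce that kind of report. This is a sketch: the function name is made up, and matchMedia is passed in so the snippet runs outside a browser. In a page you would pass `(q) => window.matchMedia(q)`.

```javascript
// Summarise what the browser reports for prefers-color-scheme.
function reportColorScheme(matchMedia) {
  return {
    prefersDark: matchMedia('(prefers-color-scheme: dark)').matches,
    prefersLight: matchMedia('(prefers-color-scheme: light)').matches,
  }
}

// Stand-in for the behaviour seen on the Pi: the light query matches even though
// the browser was launched with a dark-mode flag.
const piLikeMatchMedia = (query) => ({ matches: query.includes('light') })
console.log(reportColorScheme(piLikeMatchMedia))
```

Asking the media query directly avoids the trap from earlier in the saga, where computed colors said one thing and the screen showed another.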

The final solution: remove all prefers-color-scheme media queries entirely. Make dark mode unconditional. The comment in ui/src/assets/base.css now says it plainly:

    /* semantic color variables for this project - dark is always the default (night-time camera app) */
    :root {
      --color-background: var(--vt-c-black);
      --color-background-soft: var(--vt-c-black-soft);
      --color-background-mute: var(--vt-c-black-mute);
    }

This was not what I wanted. I wanted proper light/dark mode switching. But the AI couldn't figure out how to make Chromium's kiosk mode, its force-dark-mode flag, and CSS media queries all cooperate correctly on the Pi hardware. The unconditional dark approach was the pragmatic fallback, and for an app that's used as a telescope eyepiece at night, dark is the safer default anyway. But it's worth being honest: this was the AI giving up on the original approach, not a planned design decision.

The Bird Watcher HUD: Plan Mode Round Two

With dark mode resolved, I moved to the main feature I'd been working toward. The bird watcher stats — running time, detection count, species, confidence, seed level — had been visible in the original dedicated Bird Watcher view, but they ended up inside the settings panel during the layout consolidation earlier in the session.

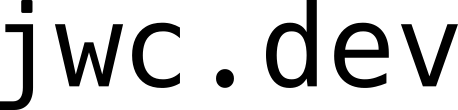

"On the bird view, the status info is currently in the settings sheet. I'd like to see this when the settings are closed. When viewing on the hyperpixel4 and the touchscreen2, there are black bars beside the image. Can the image be shifted to the side so that room can be used for the status info?"

The AI entered plan mode again. It read the existing CameraView layout, identified that the 4:3 camera image was already being pillarboxed by object-fit: contain (black bars down the sides), and proposed using that pillarbox space for the HUD. The first plan used a CSS flex row that would shrink the image area. I pushed back:

"I want to make sure the image is as large as possible no matter what orientation or camera is being used."

The AI revised — instead of a flex sidebar that reduces the image, use an absolute overlay on the dead-space. I asked a follow-up:

"can you shift the image over?"

Third revision: a flex row where the image shifts left. The image loses nothing visible because object-fit: contain was already wasting that space on black bars. The HUD is the pillarbox.

I asked the AI to export the plan to a file for further review:

"#createFile the plan as is into an untitled file"

Then "implement this plan." The HUD implementation went in cleanly.

At 160px width on the HyperPixel 4 (800×480), the HUD leaves exactly 640×480 for the image — perfect 4:3 with no letterboxing. On the TouchDisplay 2 (1280×720), the HUD expands to 320px, leaving 960×720, also perfect 4:3.
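The arithmetic behind those widths is simple enough to sketch. The function below fits the largest 4:3 image onto a screen and reports the leftover space. The name and return shape are mine for illustration, and the aspect ratio is passed as two integers to keep the division exact.

```javascript
// Largest image of the given aspect ratio that fits entirely on screen,
// plus the space left over beside it (pillarbox) or above/below it (letterbox).
function fitImage(screenWidth, screenHeight, aspectW = 4, aspectH = 3) {
  const imageWidth = Math.min(screenWidth, (screenHeight * aspectW) / aspectH)
  const imageHeight = (imageWidth * aspectH) / aspectW
  return {
    imageWidth,
    imageHeight,
    sideSpace: screenWidth - imageWidth, // width available for a side HUD
    stackSpace: screenHeight - imageHeight, // height left over on portrait screens
  }
}

console.log(fitImage(800, 480)) // HyperPixel 4
console.log(fitImage(1280, 720)) // TouchDisplay 2
```

On both Pi displays the side space is exactly the HUD width, so placing the HUD there costs the image nothing.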

Then the action bar needed to span the full width including the HUD:

"Make the action bar go the full width of the screen, covering the HUD at the bottom. Ensure that nothing in the HUD will get drawn under the action buttons."

Bird Watcher mode running on the TouchDisplay 2 (1280×720). The HUD in the right pillarbox shows 15h 14m running time, 13 detections, species identified as Haemorhous mexicanus (house finch) at 91% confidence. The seed level reads 100% but the ROI isn't configured here, so that reading is meaningless — seed level detection still needs work. The feeding activity graph is expanded in the action bar showing 30 visits over 24 hours.

The Overlapping Panels Bug

Next I wanted the histogram and feeding activity graph in the action bar:

"Can you put the feeding graph overlay and the histogram in between the navmenu hamburger button and the action button."

The first attempt: the panels went off-screen left.

"The feeding graph and the histogram are squished and flow off screen left. Here is a snapshot:"

Second attempt:

"Looks the same, here's a new screenshot"

"@agent Try Again"

Third attempt stacked the panels on top of each other:

"The feeding graph and histogram are in between the navigation hamburger button and the action button, however they are squished and sitting on top of each other..."

"Still not right. Here's a screen shot. Both graphs still overlap..."

At one point the AI got stuck in plan mode and couldn't make edits. I had to say "Start implementation" to get it out.

The root cause: both overlay wrappers had zero in-flow height. The histogram body used position: absolute; bottom: 100%, and the footer toggle was hidden with v-if. So bottom: 100% resolved to bottom: 0 for both panels — they stacked at the exact same y coordinate.
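To make that concrete: a percentage bottom offset on an absolutely positioned element resolves against the height of its containing block. The tiny sketch below models only that one rule. The 32px toggle height is a made-up number for illustration.

```javascript
// A percentage `bottom` offset resolves against the containing block's height.
function resolveBottomPx(percent, containingBlockHeightPx) {
  return (percent / 100) * containingBlockHeightPx
}

// Wrapper with a visible toggle (hypothetical 32px tall): the panel sits above it.
console.log(resolveBottomPx(100, 32))

// Wrapper whose toggle was removed by v-if: the height collapses to 0, and so does the offset.
console.log(resolveBottomPx(100, 0))
```

With both wrappers at zero height, both panels get the same offset and land on the same spot.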

The fix was to make the expanded panels in-flow flex children instead of absolutely positioned:

    /* Before: wrapper has no height, absolute panel sits at bottom:0 */
    .histogram-overlay {
      position: relative;
      display: flex;
      align-items: flex-end;
    }

    .histogram-body {
      position: absolute;
      bottom: 100%;
    }

    /* After: wrapper is a column, panel is an in-flow block above the toggle */
    .histogram-overlay {
      display: flex;
      flex-direction: column;
      justify-content: flex-end;
    }

    .histogram-body {
      flex-shrink: 0;
      height: 120px;
    }

After the layout was finally right, more polish was needed:

"Both graphs are where they should be, but when one is open it should expand to fill the space. Also, the toggle icons are very different, one has a checkbox the other doesn't. I like the one that doesn't. They should also be the same height when opened."

And one more fix:

"Once open, you cannot close the histogram because the toggle is hidden, can you make it look and work like the feeding toggle?"

The height: 120px; overflow: hidden on the histogram body was clipping its own close button. The AI restructured the template to match the feeding graph pattern.

What's Still Not Right

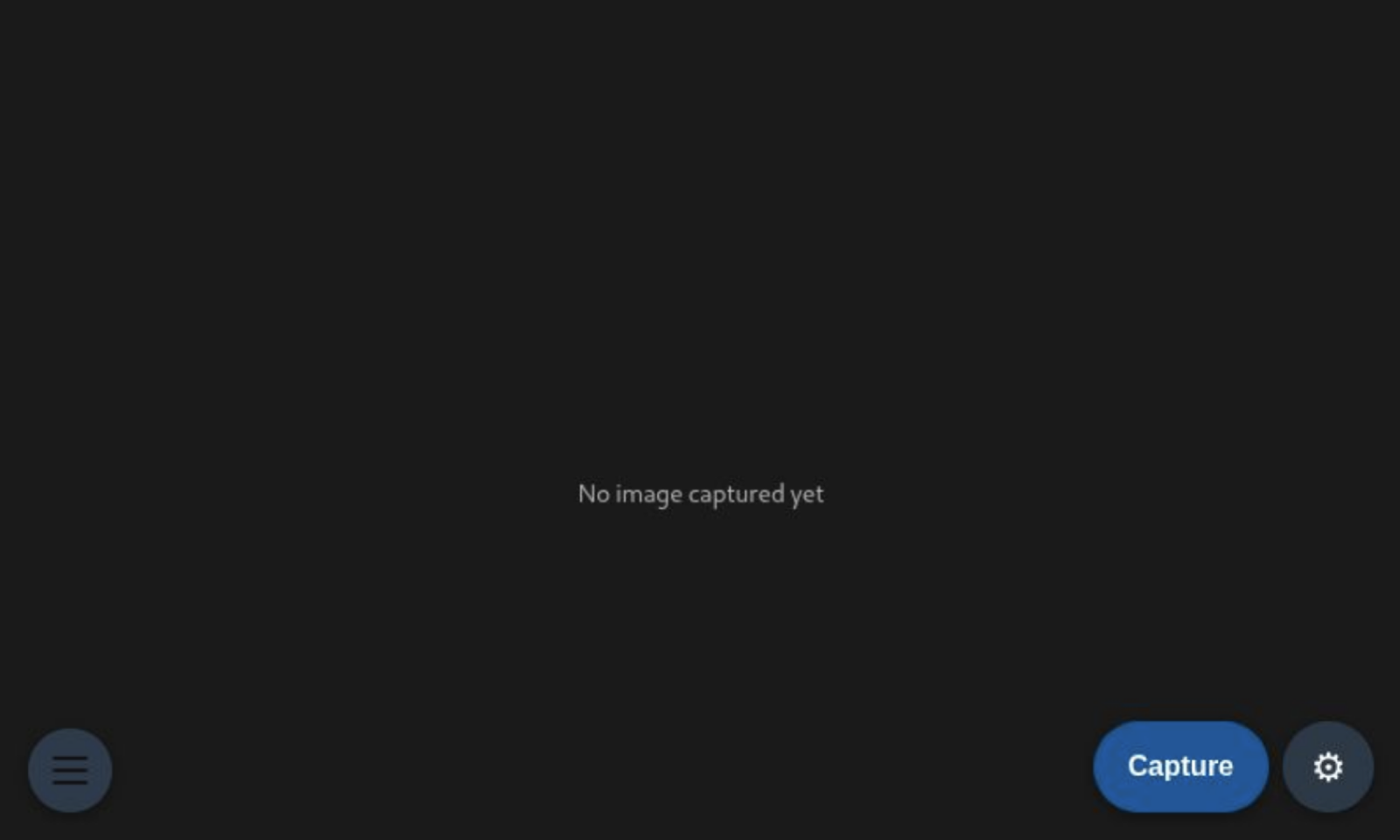

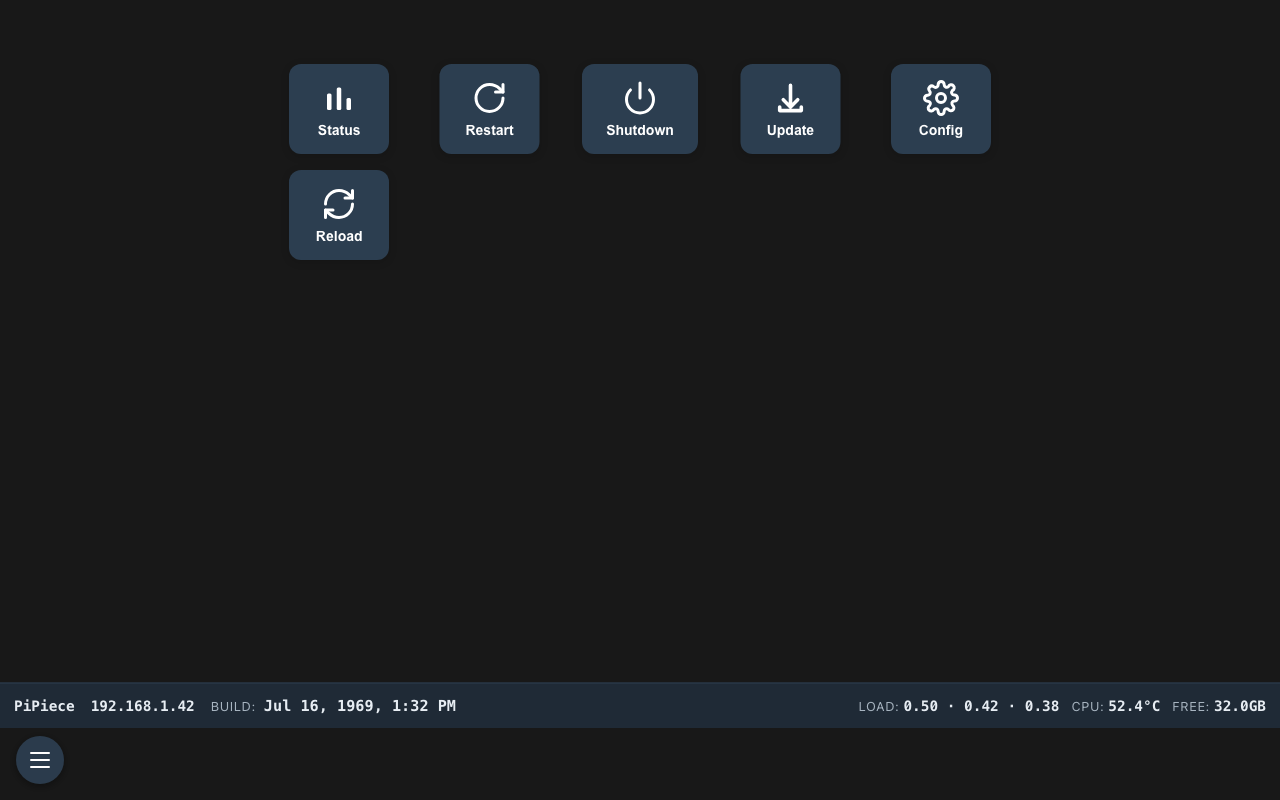

Even in the final, usable form, there are quirks. Look at this screenshot from the HyperPixel 4:

Both overlay panels expanded on the HyperPixel 4 (800×480). The feeding graph's "📊 24h" toggle is at the top of its panel. The histogram's "Hist" toggle, R/G/B stats, and Stretch selector are at the bottom.

The histogram and the feeding activity graph have inconsistent toggle button placement. The feeding graph's toggle ("📊 24h") sits at the top of its panel. The histogram's toggle ("Hist" plus the R/G/B/min badges and stretch selector) sits at the bottom. This happened because the AI built the two components at different times with different DOM ordering. The feeding graph puts the button first (<button> then <div v-if> for the graph body), while the histogram puts the content first (<Transition><div v-if> for the body, then <button>). Both wrappers use flex-direction: column; justify-content: flex-end, which renders them differently depending on source order.

It's a minor inconsistency, but one of those things that's immediately visible the moment you see both panels open together. A reminder that even when AI writes all the code, you still need human eyes on the result.

The Commit

The session ended with:

"commit these changes"

100 files changed. 91 unit tests passing. 95 E2E tests passing across five viewport profiles. The AI produced the full commit message — and this is another place where the instruction files do the work. Both .github/copilot-instructions.md and .claude/commands/commit.md define the same commit format: conventional commits (type(scope): summary), a body that explains why (not what), written "for a future engineer — including yourself — reading this commit months from now to understand why this change existed." The instructions also require drafting a BlueSky post (under 300 chars) and a LinkedIn post (2–3 paragraphs) with every commit, and for large features, suggesting a LinkedIn article outline. That's how this article got started.
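The instruction files describe the format in prose, but the subject-line rule is easy to check mechanically. This is a loose sketch, not something from the repository: the list of allowed types and both function names are assumptions.

```javascript
// Loose check for the `type(scope): summary` subject format.
// The allowed types are an assumption; the instruction files may define their own list.
const TYPES = ['feat', 'fix', 'docs', 'style', 'refactor', 'test', 'chore']
const SUBJECT_RE = new RegExp(`^(${TYPES.join('|')})\\(([a-z0-9-]+)\\): (.+)$`)

function parseSubject(subject) {
  const match = SUBJECT_RE.exec(subject)
  if (!match) return null
  const [, type, scope, summary] = match
  return { type, scope, summary }
}

// The instructions cap the BlueSky draft at under 300 characters.
function fitsBlueSky(post) {
  return [...post].length < 300
}

console.log(parseSubject('feat(ui): Bird Watcher HUD, dark mode, and action bar overlay improvements'))
console.log(parseSubject('updated some stuff'))
```

A check like this could run in a commit hook, though in this project the format is enforced only by the instructions the AI reads.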

Here's the full commit message the AI generated:

    feat(ui): Bird Watcher HUD, dark mode, and action bar overlay improvements

    Added a persistent Bird Watcher status HUD sidebar to CameraView that
    displays running time, detection count, last species, confidence, and seed
    level at a glance without opening the settings sheet. The camera image shifts
    left (160px on HyperPixel 4, 320px on TouchDisplay2) to use the pillarbox
    space that was always present due to object-fit:contain.

    The action bar was extended to full viewport width and now houses both the
    RGB histogram and feeding activity graph overlays between the hamburger and
    stop/settings buttons. Each panel expands in-flow (not absolute-positioned)
    so both can be open simultaneously without overlap; when only one is open it
    fills the full gap. Toggle buttons are now visually identical across both
    panels, active state highlighted in green.

    Dark mode is applied unconditionally (removed prefers-color-scheme gate and
    WebContentsForceDark workaround). Button sizes increased across system views.

    E2E test coverage extended to four viewport profiles (iPhone portrait/landscape,
    iPad Pro landscape, TouchDisplay2 landscape) with baseline screenshots. An
    overlap detection E2E test is added that verifies no two interactive controls
    occupy the same screen space in any view.

    Tests: 91/91 unit tests pass; E2E baselines captured for all viewports
    Docs: no docs changes required

What Worked, What Didn't

Worked well:

- TDD rhythm. Writing failing tests before implementation kept the scope honest. Each feature had a clear done condition. This isn't something the AI decided to do on its own; the project's instruction files enforce it. The .github/copilot-instructions.md (loaded automatically by GitHub Copilot) has an explicit workflow: "Red - write failing tests first. Write tests before any implementation code. Run the tests. Show the failure output. Confirm with the user before implementing. Green - implement the minimum. Write only enough code to make the failing tests pass. No extras." The Claude Code equivalent lives in .claude/commands/feature.md with the same phased structure: Discovery (ask questions first), Research (read before writing), Red (failing tests), Green (minimum implementation). Both instruction files enforce the same discipline regardless of which AI engine I'm using. The AI follows TDD because I told it to, in a file it reads at the start of every session.

- Proactive component extraction. The copilot-instructions also include a "Refactor UI Components" workflow that triggers when the AI encounters a template longer than ~100 lines, multiple visually distinct sections in one file, or tests that are hard to write because the component does too much. The instructions tell the AI to proactively offer: "This view is doing a lot - would you like me to break it into smaller, reusable components?" and then wait for confirmation. The Claude .claude/commands/refactor-ui.md skill has the same logic with a defined component category structure (camera/, capture/, bird/, system/, layout/, controls/, display/). That's how BirdWatcherSettings, PreviewSettings, TimelapseSettings, and the other camera sub-components ended up in components/camera/: the AI was following the extraction pattern baked into its instructions.

- Plan mode. Both the initial layout redesign and the HUD feature started in plan mode. The AI read the codebase, asked questions, produced architecture diagrams, and I refined the plan before any code was written. This prevented several wrong turns.

- The overlap detector. Genuinely useful, written entirely by the AI from a single prompt. It found real bugs I hadn't noticed.

- Screenshot baselines. Committing E2E screenshots means any future UI drift shows up as a test failure.

Took multiple attempts:

- The hamburger clipping: three attempts before landing on <Teleport to="body">.

- The 60-second auto-close timer test: seven attempts. The AI went through fake timers, spy-based approaches, direct method calls, and finally discovered the root cause was jsdom's getComputedStyle lying about disconnected DOM elements.

- Dark mode on the Pi: six-plus rounds of CSS fixes chasing Chromium's GPU-level WebContentsForceDark color inversion, which reports wrong values to JavaScript debugging tools. The AI ended up removing all light/dark switching and making dark mode unconditional. Not the intended outcome, but functional.

- The panel overlap fix: five prompts across multiple approaches, including one moment where the AI got stuck in plan mode. The root cause was zero-height wrappers making bottom: 100% resolve identically for both panels.

- The histogram toggle: two extra rounds after getting it positioned. The first version hid its own close button behind overflow: hidden on the parent container.

The real lesson: The AI is remarkably good at producing working code from descriptions. It wrote the overlap detector, the timer tests, the viewport configurations, the HUD layout, the component extraction — all from short prompts. Where it struggles is diagnosing visual bugs on physical hardware, handling browser-specific rendering quirks (especially Chromium's force-dark pipeline), and working through test framework edge cases where the test environment doesn't match the runtime. For those problems, you need a human looking at the screen and saying "that's still wrong."

The AI doesn't get frustrated. But it does go in circles when the debugging tools are lying to it. The dark mode saga was the clearest example: every JavaScript diagnostic said "white" while the screen showed "dark." The AI had no way to know the GPU was inverting colors after CSS was computed. It needed me to keep saying "still broken" until it exhausted the wrong approaches and tried something different.

That's the workflow. Not "AI writes the code and it works." More like "AI writes the code, I test it on real hardware, and we go back and forth until it's right." Sometimes that's one prompt. Sometimes it's seven.

The Repository

The code is on GitLab: https://gitlab.com/johnwebbcole/pipiece-ui

The project site: https://johnwebbcole.gitlab.io/pipiece-ui

91 unit tests. 95 E2E tests across five viewport configurations. The bird watcher runs locally — no cloud, no subscription.

If you're building a touchscreen UI for a Raspberry Pi and hitting similar layout challenges, or if you're experimenting with AI-driven development workflows, I'm happy to talk through what worked.